Why the Module?

This module aims to dispel the illusion that AI is external to and separate from us, and therefore uncontrollable. We also try to move beyond the idea of ethical AI alone, as nobody is against ethics. Instead, we turn to social AI and the political and social choices it requires.

What will it cover?

- What is AI for the social good

- AI as a mirror

- AI does not stand apart from humans

You might feel a bit overwhelmed by all this AI stuff. Even if you have a firm grasp of the fundamentals (by looking at our other modules!), the natural question that comes up is: what can I do? The truth is that there is no easy answer, but we will provide you with some guidance nonetheless.

Let’s start by thinking through who you are. AI is like a mirror: it reflects the data that we feed it, but it also raises important questions about what you think is important and what should be allowed. What does it mean to use AI for good?

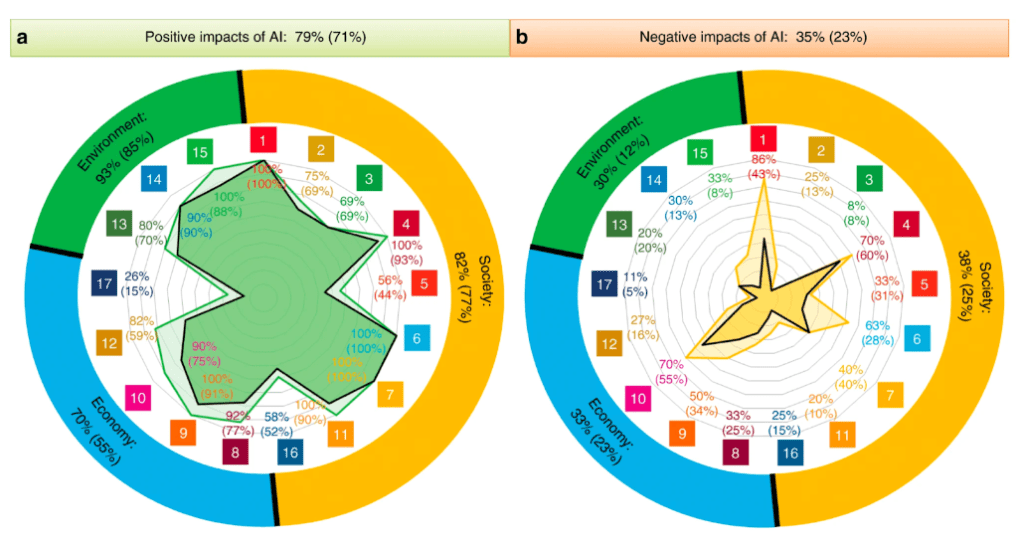

One starting point is to look at the Sustainable Development Goals (SDGs), where researchers found that AI has a positive impact on goals like reducing hunger, providing access to clean energy, and ending poverty. At the same time, AI is likely to increase inequality. Do you think that is worth it?

Study Room

This study room invites reflection on what AI for good actually means. It considers what types of issues AI could solve and reflects on the tradeoffs that may emerge when solving them. The two sources below offer clear examples of how AI can be used to make a real-world impact, and they address very obvious problems that nobody can object to. To that extent they reflect the moral lowest common denominator of AI for good. The first source is a Dutch organization that uses AI to make a real-life impact on things like oil spills and panda preservation, along with other obviously positive use cases. The second source is about the SDGs and how AI affects them, either positively or negatively.

Check out how a group in the Netherlands is using AI to make a real-life impact on things like oil spills and panda preservation.

To learn more about AI for good and its implications for the Sustainable Development Goals, explore the following platform, which has resources, examples, and projects that you can study.

Can we, however, think critically about what AI for good really means? In “Moral Machine”, an online platform developed by researchers at the Massachusetts Institute of Technology (MIT), users are presented with moral dilemmas involving autonomous vehicles. They are asked to decide what a vehicle should do in hypothetical scenarios where it must choose between different courses of action, which raises important questions about ethics, technology, and decision-making in AI-driven systems.

Activity Room

Have you ever rated somebody on Uber or on a food delivery app? The next time you do so, ask the person what the implications of those ratings can be. Discuss with them why ratings matter, and then reflect on your own choices and whether you would rate anyone differently.

Watch Nosedive, an episode of Black Mirror, a British science fiction anthology series, and reflect on the role ratings play.

If you would like to read more about ratings, here are a few resources that you can explore:

The Performativity of Ratings in Platform Work

In the Gig Economy, Customers are Complicit in Labor Exploitation

Reflection Room

Who is currently involved in developing AI, and who should be?

Do you think that you could avoid using AI altogether?

Can you imagine a future that is AI free?
