Why this Module?

This module introduces you to the “political” side of technology. This is particularly relevant for a domain- and sector-neutral technology like AI, which can be applied across areas such as education, health, law, and finance. By building this awareness, we hope the module can strengthen your agency to participate in and promote the democratic development of AI.

What will it cover?

- External (societal) views on AI

- Economic perspectives on AI

- Understanding of the political economy of AI

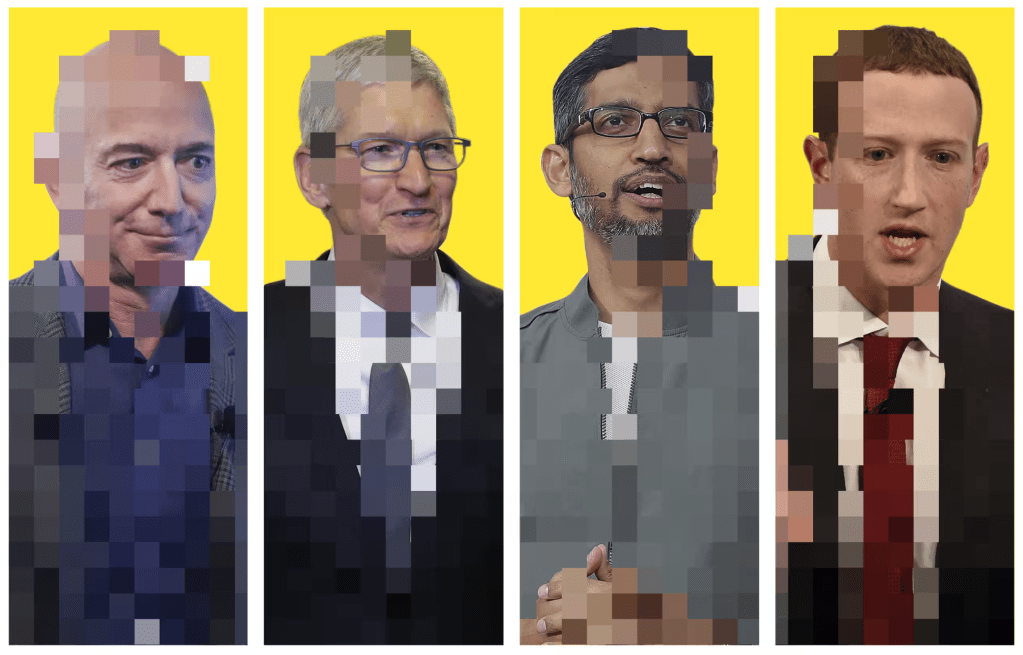

- Awareness of the size and scope of big tech companies

- Conceptualization of digital power

Whenever you think about AI, remember that, at the end of the day, all AI was built by humans! This makes it especially important to think about the kinds of people who are developing AI. Are they in it for money? Are they doing it out of scientific curiosity? Are they in it for power or to control others?

How we develop technology is not neutral: it reflects the society around us. We see differences on the basis of race or gender in society, and these can translate into how we use technology. As technology is developed, it is shaped by the political choices that exist in society. We must take great care to be aware of those choices and ensure that they do not lead to further imbalances in society.

The organizations most associated with AI are Big Tech, a group of mostly American companies that have the money and resources to develop AI. It is good to know that the cost of building a ChatGPT-like model is estimated at around $500m; that’s a five with eight zeros behind it! With so much money being invested, it’s no wonder that nobody is developing AI for free…

Study Room

The goal of this study room is to deepen your understanding of what lies beyond AI itself: how it is developed, how it is sustained, and where it may be heading. The articles give clear insight into the size of Big Tech and the source of its power. They also highlight the centrality of Big Tech within the political economy of AI, and how there are no realistic ways of thinking about AI development and deployment that do not directly involve these companies.

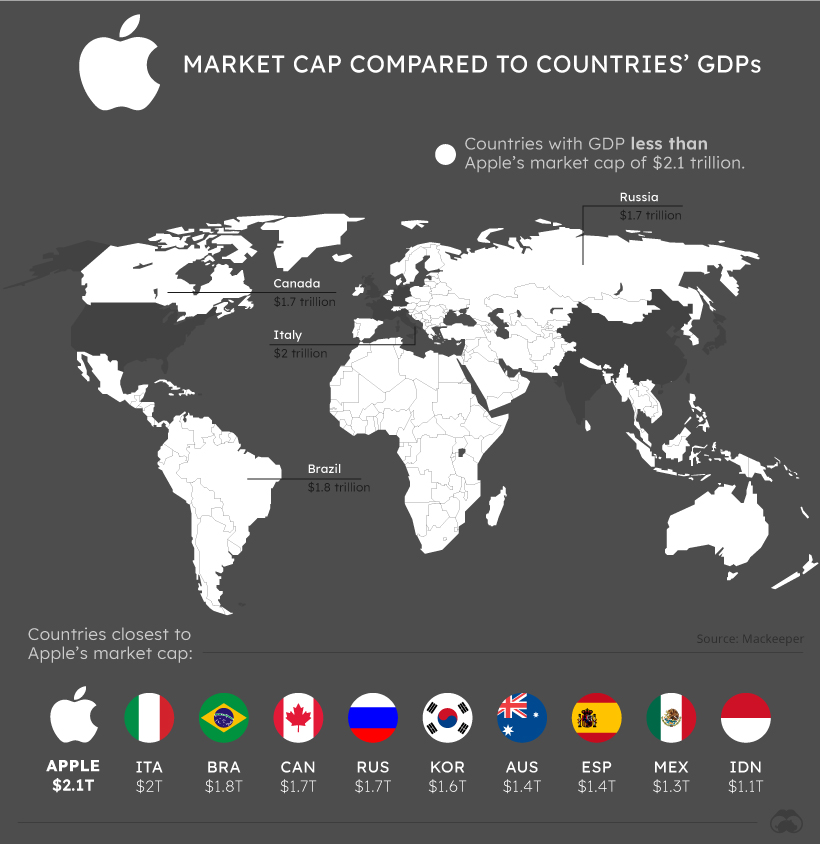

This article discusses how Big Tech companies are sometimes equivalent to large national economies in scope. It gives you a sense of their economic scale and power, and why they have the capacity and influence to shape the world around us.

If you would like to know more, this blog post from the International Monetary Fund discusses the potential implications of AI on global economic inequality. It explores how AI technologies could exacerbate disparities between wealthy and impoverished nations if not properly managed, offering insights into the socioeconomic challenges posed by AI adoption.

This article introduces how policymakers have attempted (and failed) to rein in Big Tech.

Take a look at the following video, which explores the real power held by Big Tech companies and how, within the digital realm, they are effectively unchallenged.

Big Tech firms like Google, Facebook, and Amazon wield significant control over AI research, development, and deployment. Check out the following piece in the MIT Technology Review, which explores concerns about the concentration of power and the implications for innovation and competition in the AI landscape.

As a counterpoint, the work of the Indigenous Protocol and AI working group develops new conceptual and practical approaches to building the next generation of AI systems.

Activity Room

How often do you Google something? What do you tell Google, and what does it know about you? Check out this resource from Tactical Tech on Google Society.

Think about how many Amazon products you carry on your phone. What role does Microsoft play in your life? What about other companies like Meta, Alibaba, or Reliance?

Get together with a group of family members, friends, classmates, and/or co-workers and carry out the following activity.

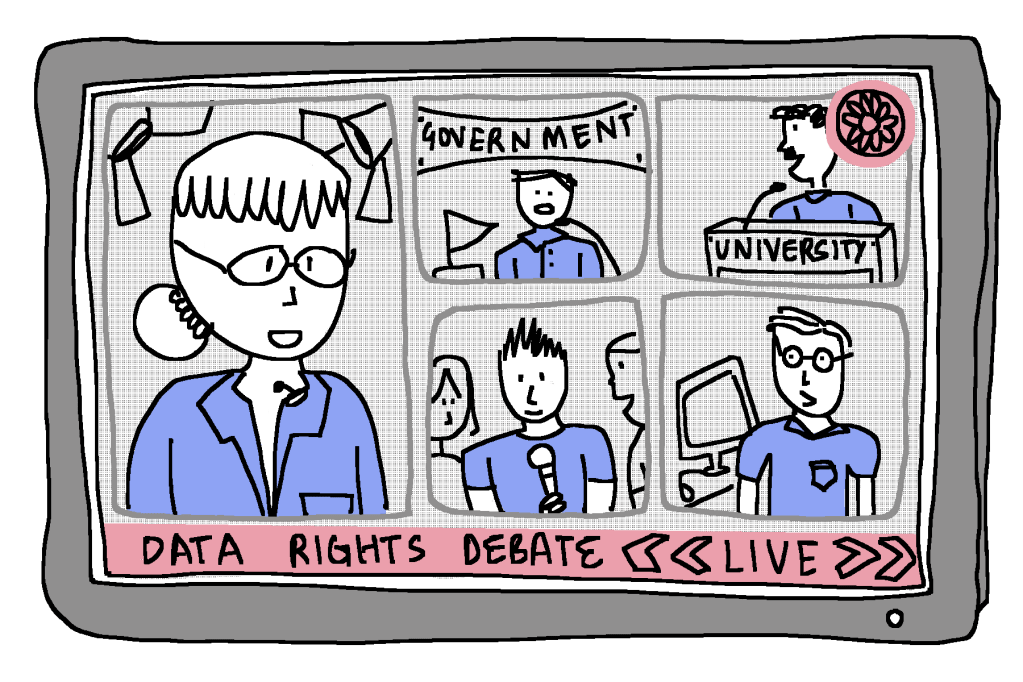

Data Rights Debate: Remote Proctoring in Education

AI-based remote proctoring is a technology-driven approach to monitoring and invigilating online exams. It involves the use of AI algorithms to assess and analyze various aspects of a test-taker’s behavior, facial expressions, and surroundings during an online examination. The goal is to ensure the integrity of the exam process by detecting any potential cheating or irregularities. This technology often incorporates features such as facial recognition, eye tracking, and behavior analysis to maintain the security and fairness of remote assessments.

The issue of remote proctoring in exams has sparked debates due to concerns about privacy invasion, potential algorithmic biases, and the collection of personal data. Balancing the need for exam integrity with safeguarding students’ data rights has become a central challenge, prompting discussions on fair assessment practices in online education.

Activity Overview: For this activity, you will carry out a mock TV news debate on the issue.

Moderation: This activity is best carried out under the guidance of a moderator; this could be a teacher, a parent, or a community leader.

Your roles will be:

TV Anchor: Facilitator of the debate, introduces the topic, assigns roles, and guides the discussion.

Government Representative: Defends the government’s stance on allowing remote proctoring, emphasizing its benefits and regulatory measures to protect data rights.

University Administrator: Advocates for the use of remote proctoring, highlighting the need for exam integrity and fair assessment in an online environment.

Student Activist: Represents the student community, expressing concerns about privacy invasion, algorithmic biases, and the impact on mental health.

Owner of Software: Represents the provider of the software that educational institutes use to implement remote proctoring of exams.

Activity Steps:

Research

Participants familiarize themselves with the background information and their assigned roles.

Role Preparation

Each participant prepares key points, arguments, and responses based on their stakeholder role.

Mock Debate

Participants engage in a structured debate, with the TV Anchor guiding the flow of discussion. Each participant presents their perspective, responds to others, and raises questions.

Community Discussion

After the debate, open the floor for a community discussion where participants can share their personal opinions, thoughts, and reflections on the topic.

Discussion Points:

Privacy concerns: What are the potential risks associated with remote proctoring?

Equality issues: How can remote proctoring disproportionately affect certain groups of students?

Regulatory measures: What policies and regulations should be in place to safeguard data rights?

Alternatives: Are there alternative assessment methods that address both integrity concerns and data rights?

Reflection Room

Do you think the intentions of AI developers are always noble?

Do you think Big Tech is the ‘right’ actor to hold so much power? Why, or why not?
