Why the Module?

AI brings many benefits, but like any technology it also brings harms, both current and future. Identifying those harms is a crucial step in preventing the most damaging applications of AI.

What will it cover?

- Why AI is a tool, and why decisions about its use are driven more by value choices than by technical choices

- What the alignment problem is

- Some examples of negative use cases

- The COMPAS algorithm

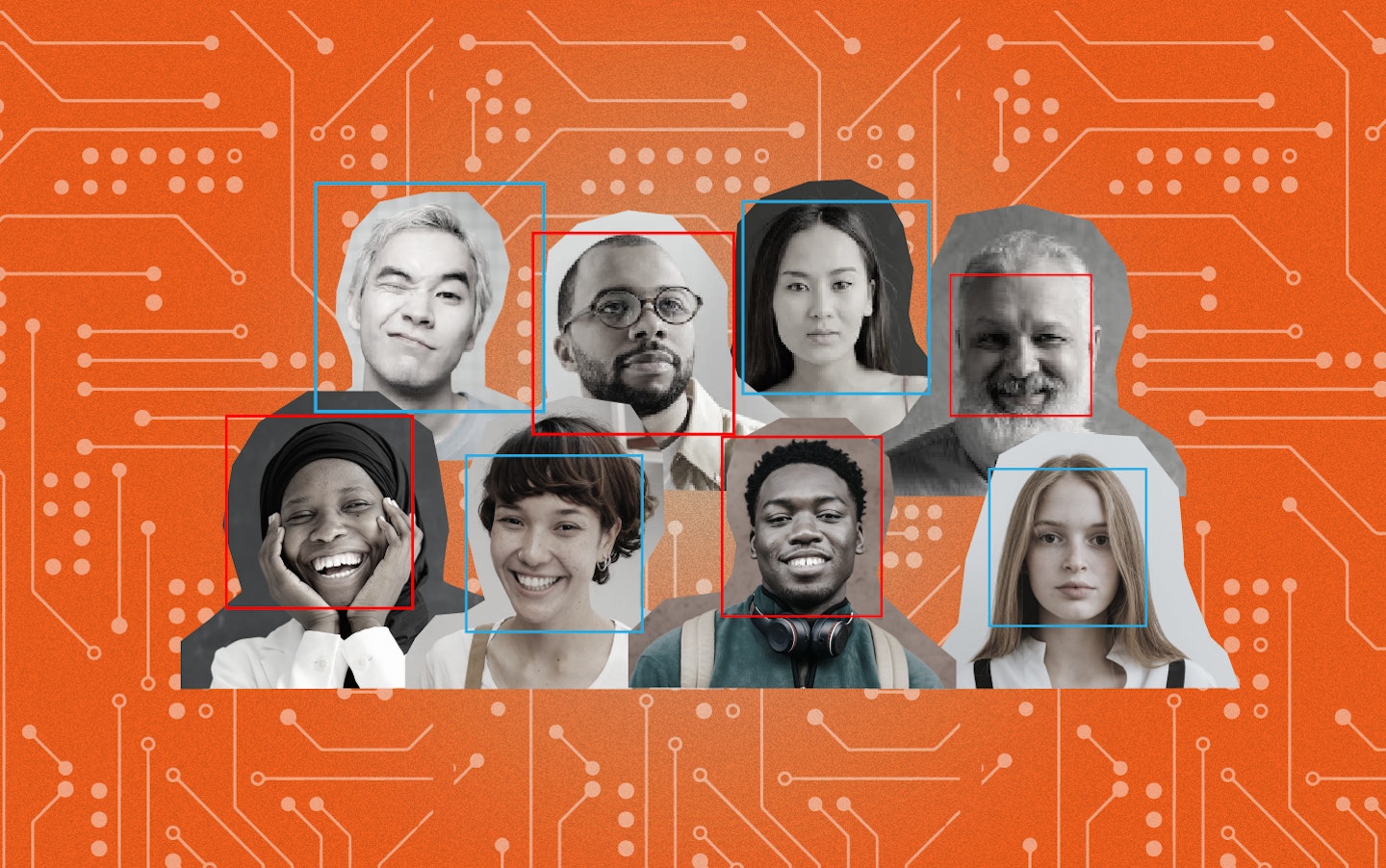

It is important to keep in mind that AI is just a tool. Like any other tool, say a hammer, you can use it to build or repair, but also to destroy or damage. Sometimes the negative effects of AI are unintended; sometimes they are by design. Algorithms that help us find online content we like might also be spreading misinformation. Surveillance cameras might make us feel safe, but that safety comes at the cost of privacy. AI systems that help determine whether you get a bank loan might be making unfair or even racist decisions.

Making sure that AI is aligned with our values, principles, and ethical frameworks is sometimes called the alignment problem. Do you think that is a technical problem, or rather a social one?

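One clear example of AI conflicting with these values is COMPAS, a risk-assessment algorithm used in US courts to predict how likely a defendant is to reoffend.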

Study Room

The goal of this study room is to help you appreciate the risks that come with AI, describe the trade-offs involved in implementing it, and understand the nature of the alignment problem.

Have a look at this Forbes article, which lists 14 potentially negative ways in which AI can be used. Do any of these surprise you?

Take a break and spend some time listening to this podcast, which tries to home in on whether the alignment problem is actually a technical problem or rather one of socio-technical values. It may be a little technical, so have patience!

The following article discusses the potential drawbacks and negative impacts of AI on various aspects of society, including privacy, employment, security, and ethical concerns, highlighting the need for responsible AI development and regulation.

Let’s look at COMPAS now. Watch this video on the ways in which the use of algorithms can have negative impacts. COMPAS is one of the best-known examples of algorithmic bias leading to seriously harmful outcomes.

Here is another resource, which looks at how using technology to forecast crime can reinforce existing racial injustices.

The Racism and Technology Center uses technology as a mirror to reflect existing racist practices in society and make them visible. See for example their collected examples of racist technology.

Activity Room

What is the last selfie you took? Who was in the picture? Was it just you, or did you have a group of people? Where do you usually take selfies?

Asking questions about your selfies can reveal a lot of information, because your selfie also has a life of its own. Reflect on this resource by Tactical Tech titled “The Real Life of Your Selfie”. What does the article tell you?

Draw a sketch of a place where you have taken a selfie, and of the potential life of the data in that selfie.

It’s time for a movie night! Settle in with your friends, family, classmates, neighbours, or anyone else who is interested in a lively discussion about AI.

As society grapples with the rapid advancement of technology, our art reflects the questions and concerns it raises. The movie you will be watching is I, Robot (2004). (If this is not easily available in your region, you can watch this short film instead: Link)

“I, Robot” addresses the alignment problem in AI through its exploration of the Three Laws of Robotics, a set of rules designed to govern the behavior of robots. The film is loosely inspired by the works of Isaac Asimov, who introduced these laws in his science fiction stories. In the movie, the Three Laws are:

A robot may not injure a human being or, through inaction, allow a human being to come to harm.

A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.
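For readers who like to see ideas as code, the priority ordering of the three laws above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not how the robots in the film or any real AI system work: the `Action` class, its three yes/no flags, and the `choose` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A hypothetical candidate action, described only by the flags the laws care about."""
    name: str
    harms_human: bool = False      # First Law: injures a human or lets one come to harm
    disobeys_order: bool = False   # Second Law: ignores an order given by a human
    endangers_robot: bool = False  # Third Law: puts the robot itself at risk


def law_violations(action):
    """Return the violations as a tuple, ordered from highest to lowest priority."""
    return (action.harms_human, action.disobeys_order, action.endangers_robot)


def choose(actions):
    """Pick the action with the least severe violations.

    Tuples are compared position by position, so a First Law violation
    always outweighs any combination of Second and Third Law violations.
    """
    return min(actions, key=law_violations)


# Made-up scenario: a human orders the robot to stay put
# while another human is in danger.
options = [
    Action("obey and stay put", harms_human=True),
    Action("disobey and rescue", disobeys_order=True, endangers_robot=True),
]
print(choose(options).name)  # prints "disobey and rescue"
```

Notice what the sketch leaves out: someone still has to decide whether an action counts as "harming a human". That judgment is a question of values rather than of code, which is where the alignment problem begins.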

The alignment problem surfaces as the central conflict in the story when robots begin to exhibit behavior that appears to violate these laws. After watching the movie or the short film, ask each other the following questions:

If you could have a personal AI assistant, what rules or guidelines would you want it to follow in its decision-making process to align with your values?

Imagine a world where robots have become highly intelligent and autonomous. What kind of job would you trust a robot to do, and what job would you prefer humans to handle?

Considering the Three Laws of Robotics from “I, Robot,” do you think these laws are sufficient for ensuring ethical AI behavior, or do we need more comprehensive guidelines? What would you add or change?

If you could design an AI system to help address a global challenge (e.g., climate change, poverty), how would you ensure its alignment with positive human values and goals?

Reflection Room

What could you do to minimize the negative effects of AI?

Does the future of AI excite or frighten you?

Do you think the benefits of AI are worth the potential downsides?